Master projects

Here you can find all our available master projects.

Open Projects (4)

-

Large Models for Robotic Manipulation in Healthcare: Dual-Arm Large Model Performance in Real-World Deployments

Objective: The goal of this project is to train, deploy, and evaluate embodied multimodal (large) models to perform dual-arm manipulation tasks relevant to healthcare assistance. The student will work towards a demonstration task. In the work leading up to the demonstration, the student will train …

Bram Grooten

Joaquin Vanschoren

-

Building an AI avatar

This project is for Dutch-speaking students only, since it requires working with large amounts of Dutch data and knowledge of Dutch culture. This project aims to create an AI avatar that is trained to act like a well-known Dutch entertainer. It is to be trained …

Joaquin Vanschoren

-

Learning to Optimize at Scale with Attention Mechanisms

Continual learning refers to the ability of a system to continually acquire new knowledge over time while retaining previously learned experience [1]. Conventional neural networks typically update all model parameters (weights) when adapting to new tasks, which often leads to catastrophic forgetting [2]. Instead, …

Joaquin Vanschoren

Anna Vettoruzzo

-

From Tables to Pixels: Adapting Tabular Foundation Models for Vision Tasks

Foundation models have recently demonstrated remarkable capabilities across a wide range of domains by learning from large-scale data and generalizing to novel, unseen tasks without the need for fine-tuning. This generalization ability is primarily enabled by their capacity for in-context learning, which is the …

Joaquin Vanschoren

Anna Vettoruzzo

Assigned Projects

No projects are currently assigned.

Finished Projects (43)

-

Enhancing Real-World Imitation Learning with Reinforcement Learning (Sep 2025)

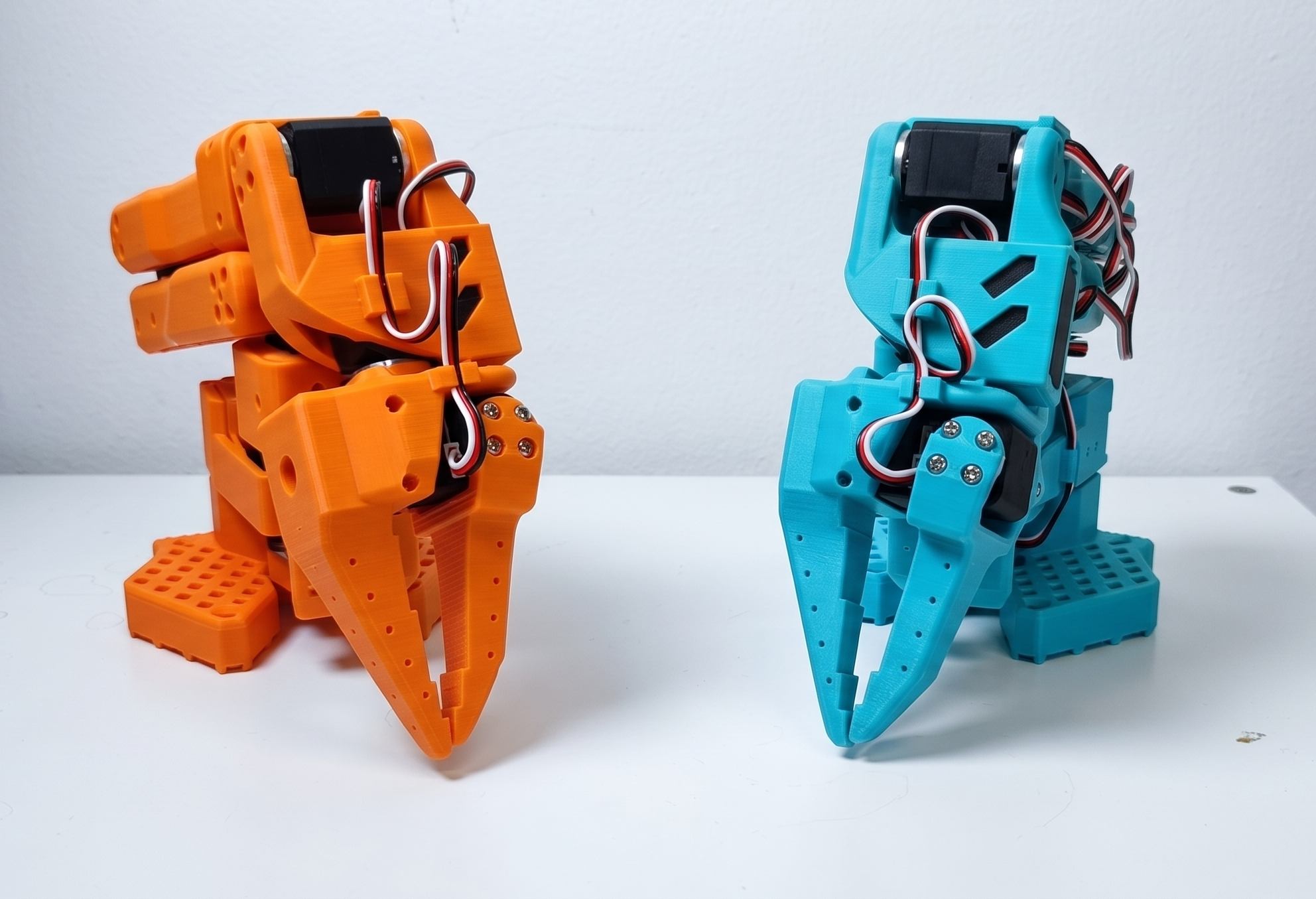

This TU/e master project is set up in collaboration with a robotics start-up in Eindhoven. Company Overview: TeleOperation Services is an innovative company based in Woensel-Noord, Eindhoven. Our cutting-edge AI-driven system empowers robotic arms to imitate tasks and perform them independently with human-like finesse and speed. Through …

Quint Bakens

Bram Grooten

Thiago Simão