Project: Large Models for Robotic Manipulation in Healthcare: Dual-Arm Large Model Performance in Real-World Deployments

Description

Objective

The goal of this project is to train, deploy and evaluate embodied multimodal (large) models that perform dual-arm manipulation tasks relevant to healthcare assistance. The student will work towards a demonstration task. In the work leading up to the demonstration, the student will train different large models and evaluate and benchmark their performance using different sensor modalities.

Demonstration task: A representative task is preparing a tray or work surface by arranging items in specific positions, for example for a scheduled examination, monitoring, or care routine. The items to be placed on the tray come from fixed locations. A human demonstrates this in this video around the 1:52 mark. In hospitals, for example, food trays are assembled according to each patient's order. In the demonstration, the robot would take items from boxes in fixed locations and place them on the tray for the patient.

Key Techniques

This project targets the integration and application of multimodal large models in robotic manipulation tasks, with relevance to healthcare-assistance scenarios. Multimodal large models, including vision-language-action (VLA) models, vision-language models (VLMs) and vision-action (VA) models, enable robots to interpret instructions, perceive through multiple sensory modalities, and map those inputs to motor actions.

Figure AI demonstrates what these models are capable of in its ‘Scaling Helix’ article and in its more recent full-body autonomy demonstration. Figure AI's model is not open-source, but many similar models are. One well-known VLA model is RT-2, developed by Google DeepMind, which co-fine-tunes a VLM on both web-scale vision/language data and robot trajectory data, enabling the model to output robot actions directly from image and instruction inputs. Another example is GR00T N1 (from NVIDIA), which employs a dual-system architecture: a vision-language reasoning module and a dedicated action module for motor control, enabling manipulation across different embodiments and modalities.
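To make "outputting actions directly" more concrete: RT-2-style models represent each continuous action dimension as a discrete token, so the language-model head can emit it like text. The sketch below shows uniform binning of an action vector and the inverse mapping. The bin count, the action ranges, and the function names `discretize` and `undiscretize` are illustrative assumptions, not the tokenizer of any specific model.

```python
from typing import List, Sequence

NUM_BINS = 256  # assumed bin count per action dimension (illustrative)


def discretize(action: Sequence[float], low: Sequence[float],
               high: Sequence[float], num_bins: int = NUM_BINS) -> List[int]:
    """Map each continuous action dimension to an integer bin index."""
    tokens = []
    for a, lo, hi in zip(action, low, high):
        clipped = min(max(a, lo), hi)          # keep the value inside its range
        frac = (clipped - lo) / (hi - lo)      # normalise to [0, 1]
        tokens.append(min(int(frac * num_bins), num_bins - 1))
    return tokens


def undiscretize(tokens: Sequence[int], low: Sequence[float],
                 high: Sequence[float], num_bins: int = NUM_BINS) -> List[float]:
    """Map bin indices back to the centre of each bin."""
    return [lo + (t + 0.5) / num_bins * (hi - lo)
            for t, lo, hi in zip(tokens, low, high)]


# Hypothetical 3-dimensional action with every dimension in [-1, 1].
low, high = [-1.0] * 3, [1.0] * 3
tokens = discretize([0.0, -1.0, 1.0], low, high)
recovered = undiscretize(tokens, low, high)
print(tokens, recovered)
```

The round trip loses at most half a bin width per dimension, which is one reason the choice of action range and resolution matters for fine manipulation.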

One of the key factors determining the performance of these models is the choice of sensory inputs. Force sensors on the gripper tips or in the joints, depth cameras, multiple camera viewpoints, and sensor data augmentation all affect the quality of the data the model receives, and therefore the model's understanding of the world and, ultimately, its performance.
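One way to compare sensor configurations is to keep the model fixed and vary only which signals are fed into it. The sketch below assembles a low-dimensional state vector from a selectable set of modalities. The `Observation` layout, the modality names, and the vector sizes are hypothetical and would need to be adapted to the actual robot and dataset format.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Sequence


@dataclass
class Observation:
    """One timestep of low-dimensional sensor readings (hypothetical layout)."""
    signals: Dict[str, List[float]] = field(default_factory=dict)


def build_state_vector(obs: Observation, modalities: Sequence[str]) -> List[float]:
    """Concatenate the selected modalities, in the given order, into one vector."""
    state: List[float] = []
    for name in modalities:
        if name not in obs.signals:
            raise KeyError(f"modality '{name}' missing from observation")
        state.extend(obs.signals[name])
    return state


# Placeholder readings for illustration only, not real sensor data.
obs = Observation(signals={
    "joint_positions": [0.0] * 12,  # assumed: 6 joint values per arm, two arms
    "fingertip_force": [0.0] * 2,   # assumed: one force value per gripper
})

baseline = build_state_vector(obs, ["joint_positions"])
with_force = build_state_vector(obs, ["joint_positions", "fingertip_force"])
print(len(baseline), len(with_force))
```

Keeping the selection explicit makes each ablation reproducible: the list of modality names can be stored alongside the trained checkpoint.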

To compare performance, a benchmark needs to be established on which the different sensor modalities can be tested across different models. The LeRobot software stack is a good starting point: it contains ready-to-use implementations of the models as well as an interface to the LeRobot hardware, which makes real-world testing much more accessible.
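Real-world benchmarks usually consist of a limited number of trials per configuration, so reporting an uncertainty range next to each success rate helps avoid over-reading small differences. The sketch below computes a Wilson score interval per (model, sensor setup) pair. The metric choice, the configuration names, and the outcome lists are assumptions for illustration; the outcomes are dummy values, not measurements.

```python
import math
from typing import Dict, List, Tuple


def wilson_interval(successes: int, trials: int, z: float = 1.96) -> Tuple[float, float]:
    """Wilson score interval for a binomial success rate (z=1.96 gives ~95%)."""
    if trials <= 0:
        raise ValueError("trials must be positive")
    p = successes / trials
    denom = 1 + z * z / trials
    centre = (p + z * z / (2 * trials)) / denom
    half = z * math.sqrt(p * (1 - p) / trials + z * z / (4 * trials * trials)) / denom
    return max(0.0, centre - half), min(1.0, centre + half)


def summarize(outcomes: Dict[Tuple[str, str], List[bool]]):
    """Return {(model, sensors): (success_rate, lower, upper)}."""
    table = {}
    for key, trials in outcomes.items():
        k, n = sum(trials), len(trials)
        table[key] = (k / n, *wilson_interval(k, n))
    return table


# Dummy per-trial outcomes (True = task completed); NOT real results.
dummy_outcomes = {
    ("model_a", "rgb"): [True, False, True, True],
    ("model_a", "rgb+force"): [True, True, True, False],
}

for (model, sensors), (rate, lo, hi) in summarize(dummy_outcomes).items():
    print(f"{model:8s} {sensors:10s} rate={rate:.2f} interval=[{lo:.2f}, {hi:.2f}]")
```

With only a handful of trials the intervals are wide, which gives a rough indication of how many rollouts per configuration are needed before two setups can be distinguished.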

The student is encouraged to test: different sensor types, sensor locations, sensor data preprocessing, data augmentation techniques, (pre-)training methods, model architecture changes, number of training samples, or hardware changes that may increase performance.

Project Phases

Phase 1 – Preparation

Goal: Prepare and plan the project. Conduct preliminary literature research, resulting in a plan for the benchmark, models, pre-processing steps, and sensor types that will be evaluated.

Phase 2 – Baseline

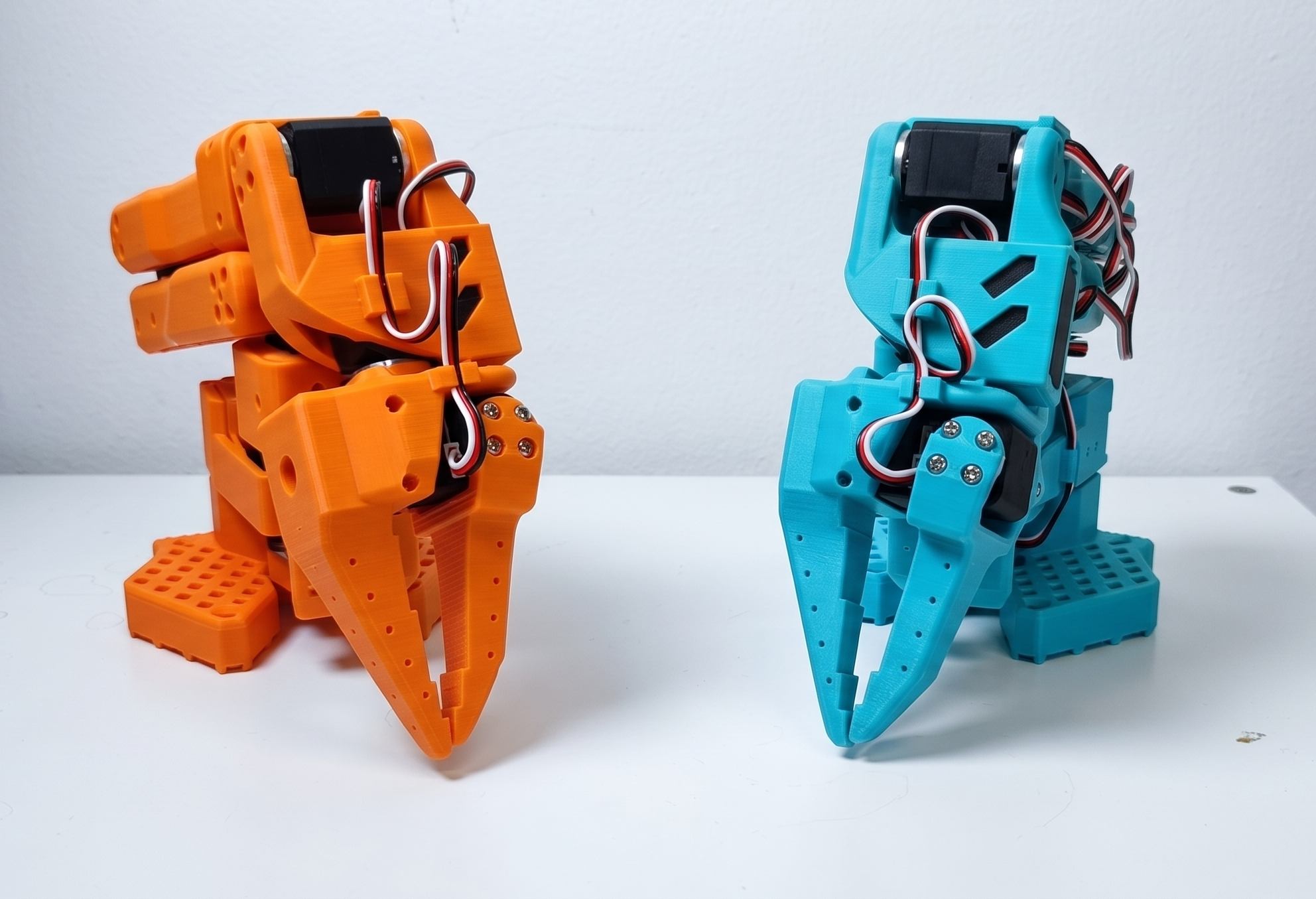

Goal: Train or fine-tune the baseline models on the LeRobot platform, using the SO101 dual robot arm setup. Get familiar with training strategies and develop an understanding of the baseline. Create a physical pick & place / grasping benchmark scene that is representative of a healthcare environment.

Phase 3 – Implementing & testing on dual-arm hardware

Goal: Research the different sensor modalities and techniques to improve performance. Develop hypotheses that can be tested using the aforementioned benchmark. In this phase there is room for experimentation and iteration to maximize the task performance. Unseq will support / facilitate sensor integrations or other hardware changes to the platform.

Phase 4 – Demonstration on Healthcare task

Goal: A final showcase demonstration of the best-performing large model and sensor modality on the healthcare task.

Expected Outcomes

- A benchmark in a representative small-scale environment for healthcare-oriented manipulation

- An evaluation of at least 3 large multimodal models (such as VLA or VA models) on the SO101 hardware using this benchmark

- Implementation of at least 1 force sensor and 1 non-standard vision sensor modality, and an evaluation of their impact on performance

- Showcase with the best-performing sensor/model combination

- Final technical report describing the hypotheses, tests and conclusions

- Clearly documented and reusable code

Company contact

Unseq B.V.

Jan-Pieter Rijstenbil (CTO)

jp@unseq.com

Details

- Supervisor

- Bram Grooten

- Secondary supervisor

- Joaquin Vanschoren

- External location

- Unseq B.V.
