Project: (External: PwC) "Where does this number come from?": Enterprise Data Lineage using LLMs and Knowledge Graphs

Description

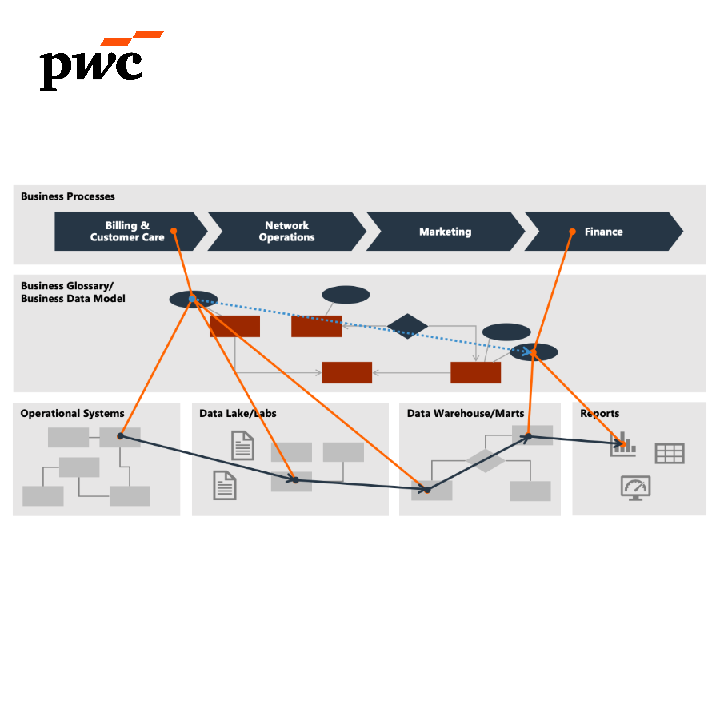

In financial and ESG reporting, tracing the data lineage of the reports that companies publish on their financial, environmental, social and governance KPIs takes a lot of time. A quick scan of the data pipelines that run through a stack of multiple enterprise systems would support the quality, transparency and integrity of these reports.

LLMs have the potential to make data more accessible to a non-technical audience through prompt-based analytics. They also have the potential to make engineering and audit teams more efficient by quickly providing an overview of an existing data pipeline.

Both of these applications hinge on appropriate tagging and management of data:

• If we ask the LLM to plot quarterly sales for product X, how will the LLM know which sales field to pick if it is not appropriately tagged in the metadata layer?

• The same basic principle applies in an engineering context, where it is more complex (e.g., anonymization policies and a variety of ingest, compute, and storage/publish options).

• For this, you could provide the LLM with the data engineering policies and principles that your company follows, so that it builds pipelines according to your approach.

• How do you set your metadata up to be able to leverage these new capabilities? How do you still build in a fail-safe / undo / human intervention step in the process?
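
To make the tagging question above concrete, here is a minimal sketch of a metadata lookup with a human-review fallback. Everything in it is an assumption made for illustration: the `FieldMeta` record, the `CATALOG` contents, the tag names and the `resolve_field` helper are hypothetical and not part of any existing tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FieldMeta:
    """One column in a (hypothetical) metadata catalog."""
    table: str
    column: str
    tags: frozenset


# Hypothetical catalog entries; a real catalog would come from a metadata layer.
CATALOG = [
    FieldMeta("crm.orders", "net_amount", frozenset({"sales", "reportable"})),
    FieldMeta("crm.orders", "gross_amount", frozenset({"sales", "draft"})),
    FieldMeta("hr.staff", "salary", frozenset({"payroll", "pii"})),
]


def resolve_field(required_tags, catalog=CATALOG):
    """Return (matches, needs_review).

    A field matches when it carries every required tag. Anything other than
    exactly one match is flagged for human review instead of being guessed.
    """
    required = frozenset(required_tags)
    matches = [f for f in catalog if required <= f.tags]
    needs_review = len(matches) != 1
    return matches, needs_review
```

With these sample tags, asking for `{"sales", "reportable"}` resolves to a single field, while asking only for `{"sales"}` matches two fields and is escalated to a person. This is one simple way to keep a human intervention step in the loop.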

The project

Based on underlying metadata and transformation scripts (e.g. SQL), create an approach to automatically detect data lineage. This serves as a fundamental building block for setting up a good data management process.
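
As a rough illustration of what detecting lineage from a transformation script could look like, the sketch below derives column-level edges from one very restricted statement shape: `INSERT INTO target (cols) SELECT exprs FROM source`, where each expression is a plain column or simple arithmetic on columns. The `extract_lineage` function and the sample table names are hypothetical; real SQL (joins, functions, subqueries, aliases) would need a proper parser rather than a regular expression.

```python
import re

# Matches only: INSERT INTO <target> (<cols>) SELECT <exprs> FROM <source>
INSERT_SELECT = re.compile(
    r"INSERT\s+INTO\s+(?P<target>[\w.]+)\s*\((?P<tcols>[^)]*)\)\s*"
    r"SELECT\s+(?P<exprs>.+?)\s+FROM\s+(?P<source>[\w.]+)",
    re.IGNORECASE | re.DOTALL,
)


def extract_lineage(sql):
    """Return a list of (source_column, target_column) edges."""
    m = INSERT_SELECT.search(sql)
    if m is None:
        raise ValueError("unsupported statement shape")
    target, source = m.group("target"), m.group("source")
    target_cols = [c.strip() for c in m.group("tcols").split(",")]
    # Assumption: expressions contain no commas (no function calls).
    exprs = [e.strip() for e in m.group("exprs").split(",")]
    if len(target_cols) != len(exprs):
        raise ValueError("column count does not match expression count")
    edges = []
    for tcol, expr in zip(target_cols, exprs):
        # Every identifier in the expression is treated as a source column.
        for scol in re.findall(r"\b[A-Za-z_]\w*\b", expr):
            edges.append((f"{source}.{scol}", f"{target}.{tcol}"))
    return edges


SAMPLE_SQL = """
INSERT INTO reporting.sales (product, revenue)
SELECT product_name, unit_price * quantity
FROM staging.orders
"""

EDGES = extract_lineage(SAMPLE_SQL)
```

For the sample statement, `reporting.sales.revenue` ends up with two upstream columns, `staging.orders.unit_price` and `staging.orders.quantity`.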

The project starts from existing work on a knowledge graph that maps the interaction between enterprise information systems (e.g. Salesforce to MySQL to PowerBI) at column level. The project will extend this work by 1) building such knowledge graphs automatically and 2) improving user interaction by allowing the knowledge graph to be queried in natural language.
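
A column-level lineage graph of this kind can be sketched with plain Python data structures. The example below is not the existing project code: the `LineageGraph` class and the column identifiers are hypothetical, chosen to mirror the Salesforce to MySQL to PowerBI example. It stores directed edges between columns and answers "where does this number come from?" with a breadth-first walk upstream.

```python
from collections import defaultdict, deque


class LineageGraph:
    """Directed graph of column-to-column dependencies."""

    def __init__(self):
        # Maps a column to the set of columns it is derived from.
        self._upstream = defaultdict(set)

    def add_edge(self, source, target):
        """Record that `target` is derived from `source`."""
        self._upstream[target].add(source)

    def trace(self, column):
        """Return every column that `column` depends on, directly or not."""
        seen = set()
        queue = deque([column])
        while queue:
            node = queue.popleft()
            for parent in self._upstream.get(node, ()):
                if parent not in seen:
                    seen.add(parent)
                    queue.append(parent)
        return seen


# Hypothetical column identifiers in the form system.table.column.
graph = LineageGraph()
graph.add_edge("salesforce.Opportunity.Amount", "mysql.sales.amount")
graph.add_edge("mysql.sales.amount", "powerbi.Revenue.total")
graph.add_edge("mysql.fx.rate", "powerbi.Revenue.total")

origins = graph.trace("powerbi.Revenue.total")
```

A natural-language layer would then only need to map a user question to the right column identifier and call `trace`, which keeps the LLM's role narrow and the lineage answer itself deterministic.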

Details

- Supervisor

- Bart Engelen

- External location

- PwC

- Interested?

- Get in contact