Project: Leveraging LLMs to create multimodal explanations

Description

AI is currently being used in a wide range of applications. However, most AI systems operate as a black box, meaning that it is hard to understand how an AI system comes to its predictions. Explainable AI (XAI) is a research field that tries to open this black box.

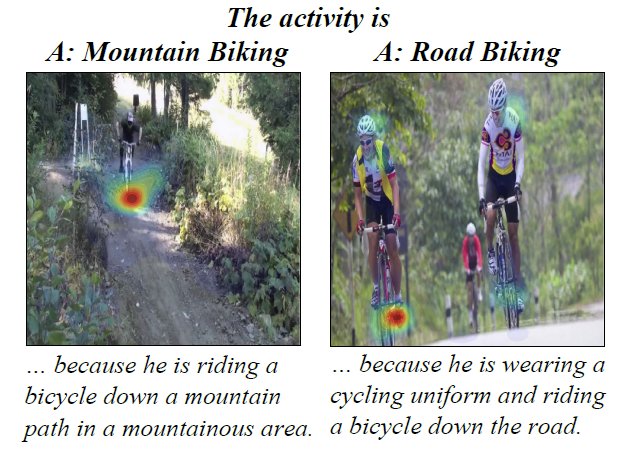

Many XAI tools already exist. In vision use cases, Grad-CAM [1] is a widely used method which overlays an input image with a heatmap showing the areas that were relevant for a prediction. A limitation of Grad-CAM is that it only gives a heatmap, it does not state what could be seen in this heatmap or why a certain area is important. For instance, is it the texture, shape, or color that is important for a given class prediction? This research tries to combine Grad-CAM with textual explanations to generate multimodal explanations to improve the quality of the overall explanation.

For instance, [2] creates a multimodal explanation model. The tasks performed by the original models are activity recognition (predicting which activity can be seen on an image, see Figure 1) and visual question answering (given a question and an image, predict an answer). The goal of the explanation model is to explain the original model. The multimodal explanation model generates a multimodal (heatmap and text) explanation given the input and output of the original model.

Figure 1: multimodal explanations from the activity recognition task, from [2]

[1] Selvaraju, R. R., Das, A., Vedantam, R., Cogswell, M., Parikh, D., & Batra, D. (2016). Grad-CAM: Why did you say that?. arXiv preprint arXiv:1611.07450.

[2] Park, D. H., Hendricks, L. A., Akata, Z., Rohrbach, A., Schiele, B., Darrell, T., & Rohrbach, M. (2018). Multimodal explanations: Justifying decisions and pointing to the evidence. In Proceedings of the IEEE conference on computer vision and pattern recognition (pp. 8779-8788).

Details

- Supervisor

-

Sibylle Hess

Sibylle Hess

- Secondary supervisor

-

WMWil Michiels